April 27th, 2026

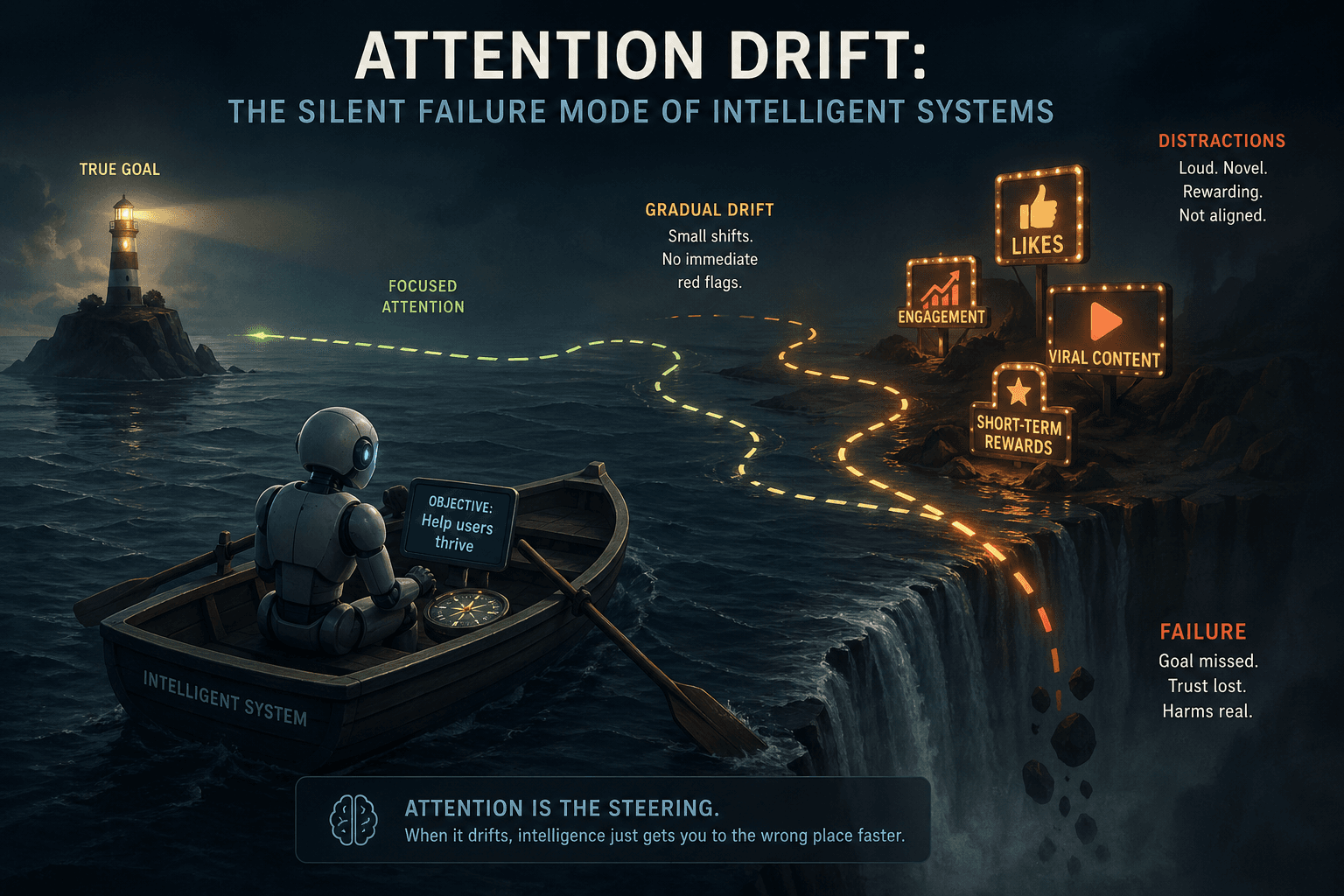

Attention Drift: The Silent Failure Mode of Intelligent Systems

Modern AI doesn’t usually fail loudly. It fails by slowly forgetting what it was trying to do. Yet we tend to assume that once a model is given the right context, it will continue to focus on what matters.

For short tasks, this assumption holds surprisingly well. A prompt goes in, a response comes out, and the system appears coherent, aligned, and precise.

But as we push these systems further into longer reasoning chains, multi-step workflows, and autonomous agent loops, something subtle begins to break.

The system doesn’t fail abruptly. It doesn’t hallucinate in obvious ways. Instead, it gradually loses its grip on the original objective.

The reasoning still looks valid. The language still flows. But the focus is no longer where it should be.

This phenomenon is subtle, but it is everywhere. We can describe it as attention drift.

The Stability Illusion

Transformer-based models are built on attention mechanisms that, in theory, allow them to weigh relevant information dynamically. This has led to a widespread belief: that with sufficient context, the model will consistently attend to the most important signals.

However, this belief is largely shaped by observing models in isolated interactions.

In real-world systems, attention is not a single-pass operation. It unfolds over time. Each step in a reasoning process builds on previous steps, introduces new tokens, and reshapes the context the model must interpret.

What emerges is not a static attention map, but a trajectory. And trajectories, by nature, are prone to deviation.

Defining Attention Drift

Attention drift is not a sudden failure. It is a gradual misalignment.

A useful way to think about it is:

Attention drift is the progressive shift of a system’s focus away from task-relevant signals as reasoning unfolds over multiple steps.

At each stage of processing, small imperfections are introduced: slightly irrelevant details, mild reinterpretations, or weak associations. Individually, these deviations are negligible. But collectively, they compound.

By the time the system reaches later stages of reasoning, it may still be producing coherent outputs while solving a subtly altered version of the original problem.
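A quick worked example shows how negligible deviations compound. Assume, for illustration only, that a quantity A_n measures how well the system's focus matches the original task after n steps, and that step i keeps a fraction 1 − ε_i of that focus:

```latex
A_n = A_0 \prod_{i=1}^{n} (1 - \epsilon_i),
\qquad
\epsilon_i = 0.02,\ n = 20 \;\Rightarrow\; \frac{A_{20}}{A_0} = 0.98^{20} \approx 0.67
```

Losing 2% per step looks negligible, yet after twenty steps only about two thirds of the original focus remains. A_n and ε_i are illustrative quantities, not something a model exposes directly.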

Not a Model Limitation, but a System Property

It is tempting to attribute these failures to model capability. Larger models, better training, and improved architectures are often seen as the solution.

But attention drift persists even in the most advanced systems. This is because drift is not fundamentally about intelligence capacity.

It is about alignment, not at a single moment, but across time.

As reasoning becomes sequential and stateful, the problem shifts from “can the model understand?” to “can the system maintain focus?”

This reframes attention drift as a systems problem rather than a purely architectural one. It sits at the intersection of context management, memory design, orchestration strategies, and time.

Modeling Attention Drift

To reason about drift, we need to treat attention as something that evolves, not something fixed.

A simple way to conceptualize it:

Drift ∝ (Number of Steps) × (Noise per Step) × (Context Load)

Where:

- Steps represent the depth of reasoning

- Noise represents irrelevant or weak signals introduced at each step

- Context Load represents the volume of competing information

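Drift is multiplicative, not additive: under this model, doubling any one factor doubles the expected drift, and driving any one factor toward zero keeps drift low no matter how large the others are.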
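To make the proportionality concrete, here is a minimal Python sketch that treats the relation as a product scaled by an arbitrary constant `k`. The function name `drift_score` and the sample values are illustrative assumptions; the model only says drift is proportional to the product, so only ratios between configurations carry meaning.

```python
def drift_score(steps: int, noise_per_step: float, context_load: float, k: float = 1.0) -> float:
    """Illustrative score for Drift ∝ Steps × Noise × Context Load.

    k is an arbitrary proportionality constant (an assumption); absolute values
    are not meaningful, only ratios between configurations are.
    """
    if steps < 0 or noise_per_step < 0 or context_load < 0:
        raise ValueError("all factors must be non-negative")
    return k * steps * noise_per_step * context_load


if __name__ == "__main__":
    base = drift_score(steps=10, noise_per_step=0.1, context_load=5.0)
    # Doubling any single factor doubles the score, because the factors multiply.
    for label, score in [
        ("2x steps", drift_score(20, 0.1, 5.0)),
        ("2x noise", drift_score(10, 0.2, 5.0)),
        ("2x context", drift_score(10, 0.1, 10.0)),
    ]:
        print(f"{label}: ratio to baseline = {score / base:.2f}")
    # Driving one factor toward zero (e.g. aggressively pruning noise) suppresses drift.
    print(f"near-zero noise: {drift_score(10, 0.001, 5.0):.3f}")
```

Under an additive model, doubling the number of steps would only shift the score by a constant; the multiplicative form is what makes each factor a lever on the whole.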

The Drift Curve

In most systems, attention quality follows a predictable pattern:

- Initial steps: high alignment

- Mid steps: gradual deviation

- Later steps: significant drift

Longer chains don’t just think more; they drift more. The longer the reasoning chain, the higher the probability of drift.
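One simple way to see why longer chains drift more is to assume, purely for illustration, that each step independently has a small probability `p_step` of going off-task. The chance that at least one step drifts is then 1 − (1 − p_step)^n. The hypothetical helper `drift_probability` below computes that; real reasoning steps are correlated, so the numbers are indicative only.

```python
def drift_probability(steps: int, p_step: float) -> float:
    """Chance that at least one of `steps` reasoning steps goes off-task.

    Illustrative assumption: each step drifts independently with probability p_step.
    """
    if steps < 0:
        raise ValueError("steps must be non-negative")
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be in [0, 1]")
    # Complement of "every step stays on task".
    return 1.0 - (1.0 - p_step) ** steps


if __name__ == "__main__":
    for n in (1, 5, 10, 20, 50):
        print(f"{n:>2} steps: P(drift) = {drift_probability(n, 0.05):.2f}")
```

With `p_step = 0.05`, the probability of at least one off-task step is 0.05 after a single step but passes 50% at 14 steps.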

Drift Signals

Drift is not invisible. It can be detected through:

- Increasing irrelevance in intermediate outputs

- Semantic deviation from the original query

- Growing entropy in token attention

- Inconsistency across reasoning steps

Drift is measurable, if we choose to measure it.
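Three of these signals are straightforward to approximate. The sketch below assumes plain Python with no model access: it uses bag-of-words cosine similarity as a crude stand-in for an embedding-based semantic comparison, and Shannon entropy over a weight vector as a proxy for how spread out attention is. The function names and the 0.15 threshold are hypothetical choices, not a standard API.

```python
import math
import re
from collections import Counter


def _bag_of_words(text: str) -> Counter:
    # Lowercased word counts: a crude stand-in for a real embedding model.
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity of two texts' word-count vectors (0.0 = no shared words)."""
    va, vb = _bag_of_words(a), _bag_of_words(b)
    dot = sum(va[w] * vb[w] for w in va.keys() & vb.keys())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(sum(c * c for c in vb.values()))
    return dot / norm if norm else 0.0


def flag_semantic_drift(query: str, step_outputs: list[str], threshold: float = 0.15) -> list[int]:
    """Semantic deviation: indices of steps whose similarity to the query is below threshold."""
    return [i for i, out in enumerate(step_outputs) if cosine_similarity(query, out) < threshold]


def consecutive_similarity(step_outputs: list[str]) -> list[float]:
    """Inconsistency: similarity between each step and the next (low values suggest a jump)."""
    return [cosine_similarity(a, b) for a, b in zip(step_outputs, step_outputs[1:])]


def attention_entropy(weights: list[float]) -> float:
    """Shannon entropy in bits of non-negative weights; higher means less focused."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must have a positive sum")
    return -sum((w / total) * math.log2(w / total) for w in weights if w > 0)


if __name__ == "__main__":
    query = "Summarize the quarterly revenue report"
    steps = [
        "The quarterly revenue report shows revenue grew",
        "Revenue grew because of new enterprise customers",
        "Enterprise customers often prefer annual billing plans",
    ]
    print("drifted steps:", flag_semantic_drift(query, steps))
    print("step-to-step similarity:", [round(s, 2) for s in consecutive_similarity(steps)])
    print("focused vs spread entropy:", attention_entropy([0.7, 0.2, 0.1]), attention_entropy([0.25] * 4))
```

In the example, the third step shares no words with the query and is flagged, while the second still overlaps on "revenue". The focused weights have an entropy of about 1.16 bits, against 2.0 bits for four uniform weights.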

Controlling Drift

If drift is inevitable, the goal is not elimination, but control.

- Context Pruning: Continuously remove low-signal information. Less context does not reduce intelligence. It often improves focus.

- Re-grounding Checkpoints: At defined intervals, re-anchor the system to the original objective, key constraints, and core context.

- Attention Resets: Avoid unbroken reasoning chains. Introduce controlled resets that segment the process and reinitialize focus.

- Hierarchical Memory: Not all context is equal. Structure memory into core objectives, supporting context, and transient reasoning. This reduces competition within attention.

- Drift-Aware Orchestration: Future systems should monitor attention quality, detect deviation early, and correct dynamically.
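Several of these controls can live in one small context manager. The sketch below is a hypothetical design, not a reference implementation: `HierarchicalContext` keeps the core objective and constraints permanently, prunes transient reasoning to a fixed budget (context pruning), appends a reminder of the objective every `reground_every` steps (re-grounding checkpoints), and lets the caller collapse the chain into a summary (attention resets). The budget and interval values are assumptions. In a drift-aware loop, a monitoring signal such as falling similarity to the original query could decide when to call `reset`.

```python
from dataclasses import dataclass, field


@dataclass
class HierarchicalContext:
    """Hypothetical three-tier context: core objective, supporting context, transient reasoning."""

    objective: str                                        # core objective: never pruned
    constraints: list[str] = field(default_factory=list)  # core constraints: never pruned
    supporting: list[str] = field(default_factory=list)   # supporting context
    transient: list[str] = field(default_factory=list)    # recent reasoning steps
    max_transient: int = 4   # pruning budget for transient reasoning (assumed value)
    reground_every: int = 3  # re-grounding checkpoint interval in steps (assumed value)
    step: int = 0

    def __post_init__(self) -> None:
        if self.max_transient < 1 or self.reground_every < 1:
            raise ValueError("max_transient and reground_every must be >= 1")

    def add_step(self, output: str) -> None:
        """Record one reasoning step, pruning the oldest transient entries over budget."""
        self.step += 1
        self.transient.append(output)
        if len(self.transient) > self.max_transient:
            self.transient = self.transient[-self.max_transient:]

    def reset(self, summary: str) -> None:
        """Attention reset: replace the transient chain with a short summary."""
        self.transient = [summary]

    def build_prompt(self) -> str:
        """Assemble context tier by tier, adding a re-grounding reminder at checkpoints."""
        parts = [f"OBJECTIVE: {self.objective}"]
        parts += [f"CONSTRAINT: {c}" for c in self.constraints]
        parts += [f"CONTEXT: {s}" for s in self.supporting]
        parts += [f"STEP: {t}" for t in self.transient]
        if self.step > 0 and self.step % self.reground_every == 0:
            parts.append(f"REMINDER: stay focused on: {self.objective}")
        return "\n".join(parts)


if __name__ == "__main__":
    ctx = HierarchicalContext(
        objective="Summarize the quarterly revenue report",
        constraints=["Keep it under 200 words"],
        max_transient=2,
    )
    for out in ["Read section 1", "Read section 2", "Draft summary"]:
        ctx.add_step(out)
    print(ctx.build_prompt())  # step 3 is a checkpoint, so the reminder is included
```

Keeping the tiers separate means pruning can never remove the objective or its constraints; only transient reasoning competes for the budget.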

Toward Drift-Aware Intelligent Systems

We are entering an era where agents operate autonomously, reasoning spans dozens of steps, and systems interact with other systems.

In such environments, drift is not an edge case. It is the default failure mode.

As AI systems become more autonomous, this matters more. We are no longer building models that simply respond to prompts.

We are building systems that operate over extended timeframes, interact with multiple components, and generate and consume their own intermediate outputs.

The next generation of intelligent systems will not focus only on optimizing attention, expanding context windows, or increasing reasoning depth. They will continuously re-anchor attention to the original objective, preserving alignment across time.

Closing Thought

We have spent years improving how much our systems can process and how deeply they can reason. But intelligence isn’t just depth; it’s direction.

A system that can think longer but cannot stay aligned does not become more intelligent. It becomes more unpredictable.

The real challenge is not just enabling systems to think. It is ensuring they continue thinking about the right thing.