March 9th, 2026

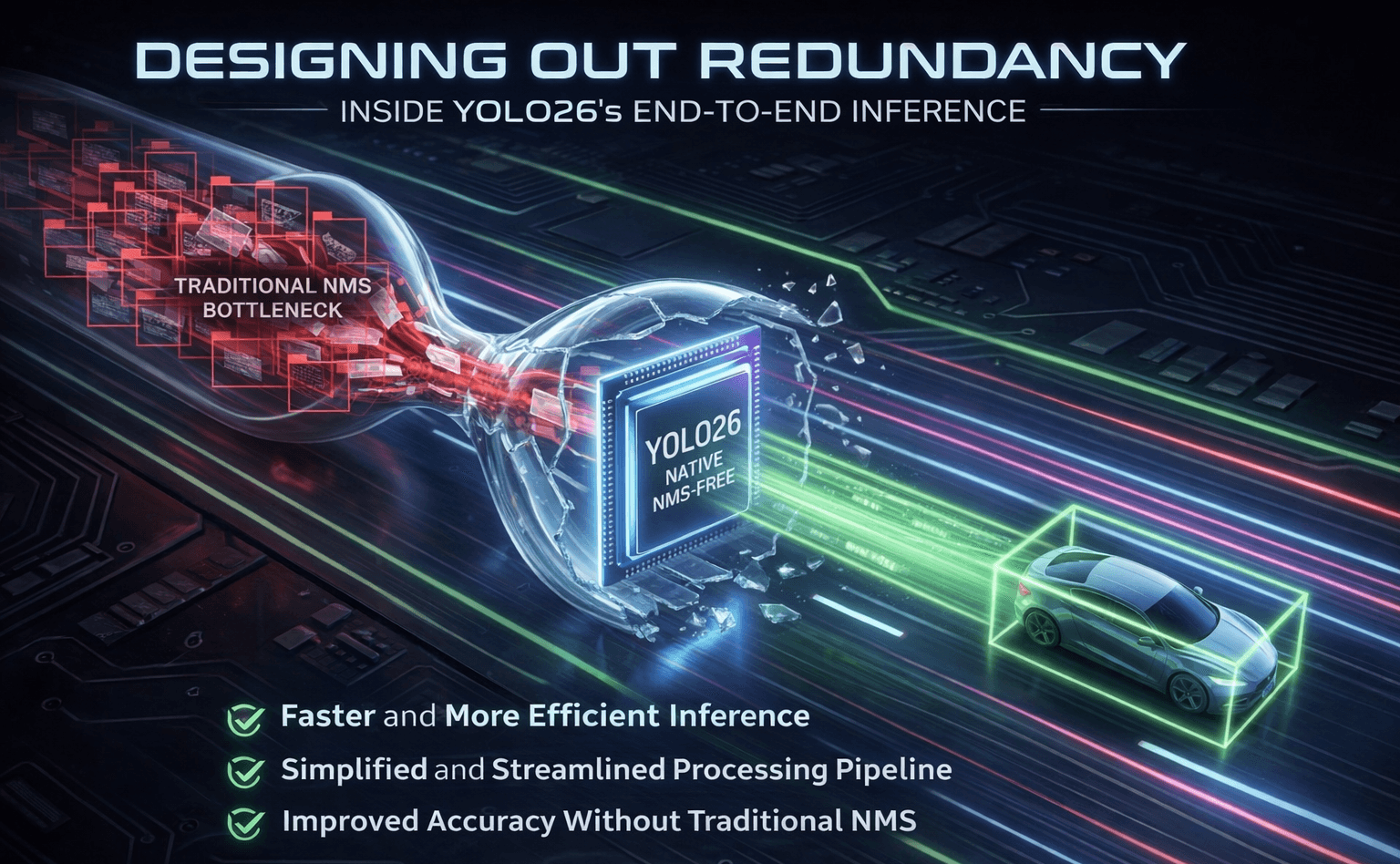

Designing Out Redundancy: Inside YOLO26’s End-to-End Inference

Every millisecond of inference latency has a cost. Computer vision has quietly become a core capability across modern industries. From manufacturing automation to retail analytics and robotics, vision models are now making real-time decisions that influence operational outcomes.

Among the many detection architectures developed over the years, YOLO (You Only Look Once) has consistently stood out for one reason: it works extremely well in the real world.

While many research models focus on pushing benchmark scores, YOLO models have historically prioritized something more practical: speed, simplicity, and deployability.

With YOLO26, that philosophy evolves again. But this time, the change isn’t just about performance improvements.

It’s about rethinking the structure of object detection itself.

Why YOLO Became the Industry Favorite

YOLO’s rise in computer vision was not accidental. It solved several problems that engineers repeatedly encounter when building real systems.

- Speed: YOLO processes images in a single pass, enabling real-time detection in video streams and edge devices.

- Simplicity: Its unified architecture reduces the complexity of training and deployment pipelines.

- Deployment Flexibility: YOLO models export smoothly to formats like ONNX, TensorRT, TFLite, and CoreML, making them adaptable across different hardware environments.

- Scalability: The same architecture can run on edge devices, enterprise servers, or large cloud inference systems.

Because of these qualities, YOLO became one of the most widely deployed object detection frameworks across industries.

But even YOLO models carried a hidden inefficiency.

The Hidden Inefficiency in Object Detection

Most object detection systems don’t predict a single bounding box for each object.

Instead, they generate multiple overlapping predictions for the same object. Each prediction has its own confidence score.

This redundancy occurs because models make predictions across grids, anchors, and multiple feature scales.

The result is a pipeline that looks like this:

Traditional Detection Pipeline

Image → Model → Many Bounding Boxes → Non-Maximum Suppression (NMS) → Final Detections

NMS acts as a filtering step. It sorts predictions by confidence and removes overlapping boxes using IoU thresholds until only the best one remains.

For years, NMS worked well enough that engineers accepted it as the cost of doing business. But it was always duct tape, The model produced redundant predictions, and post-processing had to clean them up afterward.

Preventing Redundancy Instead of Removing It

YOLO26 takes a different path. Instead of generating multiple predictions and filtering them later, the model is trained to produce only one prediction per object.

This changes the inference pipeline dramatically.

Previous Pipeline

Image → Model → Many Boxes → NMS → Final Detections

YOLO26 Pipeline

Image → Model → Final Detections

No suppression step. No extra filtering layer.

The model learns to make decisive predictions directly.

In other words, redundancy is no longer removed after inference, it is designed out of the model itself.

How YOLO26 Makes This Possible

Training a model to produce exactly one prediction per object is harder than it sounds.

Naive approaches collapse during training with only one positive sample per object, gradient signals are too sparse for the network to learn stable localization.

Earlier attempts struggled because restricting predictions too early makes training unstable. With fewer positive samples, models often fail to learn strong localization.

YOLO26 addresses this using a dual-head training strategy.

During training, the model uses two detection heads:

Dense Training Head

This head behaves like traditional detectors. It assigns multiple predictions to each object, producing dense training signals that help the network learn robust spatial features.

One-to-One Prediction Head

This head assigns exactly one prediction to each object and learns the decisive output behavior used during inference. Both heads share the same backbone and are aligned during training.

Once training is complete, the dense head is removed.

The model retains only the one-to-one prediction head, allowing it to output final detections directly without requiring NMS.

Think of it as a teacher-student dynamic: the dense head provides a rich learning signal, while the one-to-one head learns to commit.

A Simpler and Faster Inference Pipeline

YOLO26 also simplifies another component commonly used in modern detectors: Distribution Focal Loss (DFL).

While DFL improves bounding box precision, it introduces extra decoding operations such as softmax calculations and integral transformations.

On GPUs this overhead is small, but on CPUs and edge hardware, these steps can add 10-30% overhead depending on hardware, a meaningful penalty for battery-constrained or real-time systems.

YOLO26 removes DFL entirely and replaces it with a direct regression head.

This reduces computational complexity and improves inference efficiency, especially on edge devices.

In practical terms, the model becomes:

- simpler to export

- lighter to run on CPUs

- faster for real-time edge inference

Beyond Object Detection

YOLO26 is designed as a multi-task vision architecture. In addition to object detection, it supports several other computer vision capabilities:

- Instance Segmentation

- Image Classification

- Pose Estimation

- Oriented Object Detection

This flexibility allows the same framework to power applications ranging from robotics perception to industrial inspection systems.

Closing the Gap Between Training and Deployment

One of the long-standing challenges in production AI systems is the gap between training pipelines and deployed models.

Many detection systems rely on external post-processing steps such as custom NMS implementations, hardware-specific plugins, or application-side filtering.

These extra components often introduce deployment complexity and inconsistencies. YOLO26 simplifies this workflow.

The model becomes a self-contained inference graph:

What you train is what you export is what you deploy.

For engineering teams, this alignment significantly reduces debugging effort and deployment friction.

The M37Labs Perspective

At M37Labs, we evaluate AI architectures not only by benchmark accuracy but by their enterprise deployability.

Production AI systems succeed when they minimize complexity, reduce infrastructure friction, and behave predictably across environments.

From that perspective, YOLO26 represents an important shift.

It moves object detection away from post-processing heuristics and toward architectural clarity.

For enterprise systems, that matters because:

- simpler pipelines reduce engineering overhead

- self-contained models improve deployment stability

- edge inference becomes significantly more viable

In other words, the value is not only faster detection.

It’s simpler systems that are easier to operate at scale.

The Future of Object Detection

Object detection systems have historically relied on a layered pipeline: prediction, filtering, and correction.

But as detection models mature, the focus is shifting.

The next breakthroughs are not just about faster models, they are about simpler systems.

YOLO26 reflects this change in mindset. Instead of improving performance by stacking additional modules, it simplifies the detection pipeline by removing steps that were never meant to be permanent.

NMS, once considered essential, now becomes optional. Post-processing begins to disappear.

In many ways, this signals a broader transition in AI system design: from performance optimization to architectural refinement.

And in that evolution, YOLO26 marks an important milestone.

It’s learning to get them right the first time.